A Lakestream is a new concept I’ve seen pop up. I think it’s legitimately gonna define the next few years of event streaming, so let’s unpack the buzzword:

1) The Lake 🐟

In 2026, the dominant data lake trend is the so-called data lakehouse - basically an open-table format (like Iceberg / Delta Lake) with Parquet files on S3.¹

A quick recap of the terms:

A data lake is an immutable file collection of structured and unstructured data (e.g. PDFs, videos).

A data warehouse is a structured, mutable tabular representation of data.

A data lakehouse is a mix of both - a mutable table abstraction on top of (immutable) structured files in a lake.

Lakehouses decouple storage from compute by defining the storage format and forcing you to bring your own compute engine.

The result is a zero-copy architecture where only a single copy of your data exists:

sample high-level architecture (missing a catalog, but whatever)
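To make the "mutable table on top of immutable files" idea concrete, here is a toy sketch in Python. It is not how Iceberg or Delta Lake are actually implemented: `ToyLake`, `ToyTable` and the file paths are made-up names, the "files" are in-memory tuples rather than Parquet, and a delete naively rewrites every live row into one new file (real formats are far smarter about this). The point is only that a table is a list of snapshots, each pointing at files that never change.

```python
from dataclasses import dataclass, field


@dataclass
class ToyLake:
    """Stand-in for object storage: a file is written once and never modified."""
    files: dict[str, tuple[dict, ...]] = field(default_factory=dict)

    def put(self, path: str, rows: list[dict]) -> None:
        if path in self.files:
            raise ValueError(f"{path} already exists; lake files are immutable")
        self.files[path] = tuple(rows)


@dataclass
class ToyTable:
    """Table metadata only: every snapshot is a tuple of file paths."""
    lake: ToyLake
    snapshots: list[tuple[str, ...]] = field(default_factory=lambda: [()])

    def append(self, path: str, rows: list[dict]) -> None:
        # New data = one new immutable file + one new snapshot that includes it.
        self.lake.put(path, rows)
        self.snapshots.append(self.snapshots[-1] + (path,))

    def delete_where(self, predicate, new_path: str) -> None:
        # "Mutation" = write the surviving rows to a new file and commit a
        # snapshot that points at it. Old files stay untouched.
        kept = [row for row in self.scan() if not predicate(row)]
        self.lake.put(new_path, kept)
        self.snapshots.append((new_path,))

    def scan(self, snapshot: int = -1) -> list[dict]:
        # Any engine that can read the metadata and the files can run this.
        return [row for path in self.snapshots[snapshot] for row in self.lake.files[path]]


lake = ToyLake()
orders = ToyTable(lake)
orders.append("data/0001.parquet", [{"id": 1}, {"id": 2}])
orders.append("data/0002.parquet", [{"id": 3}])
orders.delete_where(lambda row: row["id"] == 2, "data/0003.parquet")

current_ids = [row["id"] for row in orders.scan()]
previous_ids = [row["id"] for row in orders.scan(snapshot=-2)]
```

After the delete, the current snapshot no longer contains row 2, but the previous snapshot still does, because none of the underlying files were changed. That is the whole trick: the compute engine is whatever code calls `scan`, and the storage is just files plus metadata.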

People prefer lakehouses when they:

2) The Stream 🌊

In 2026, the dominant Kafka trend is dumping everything to object storage (S3).

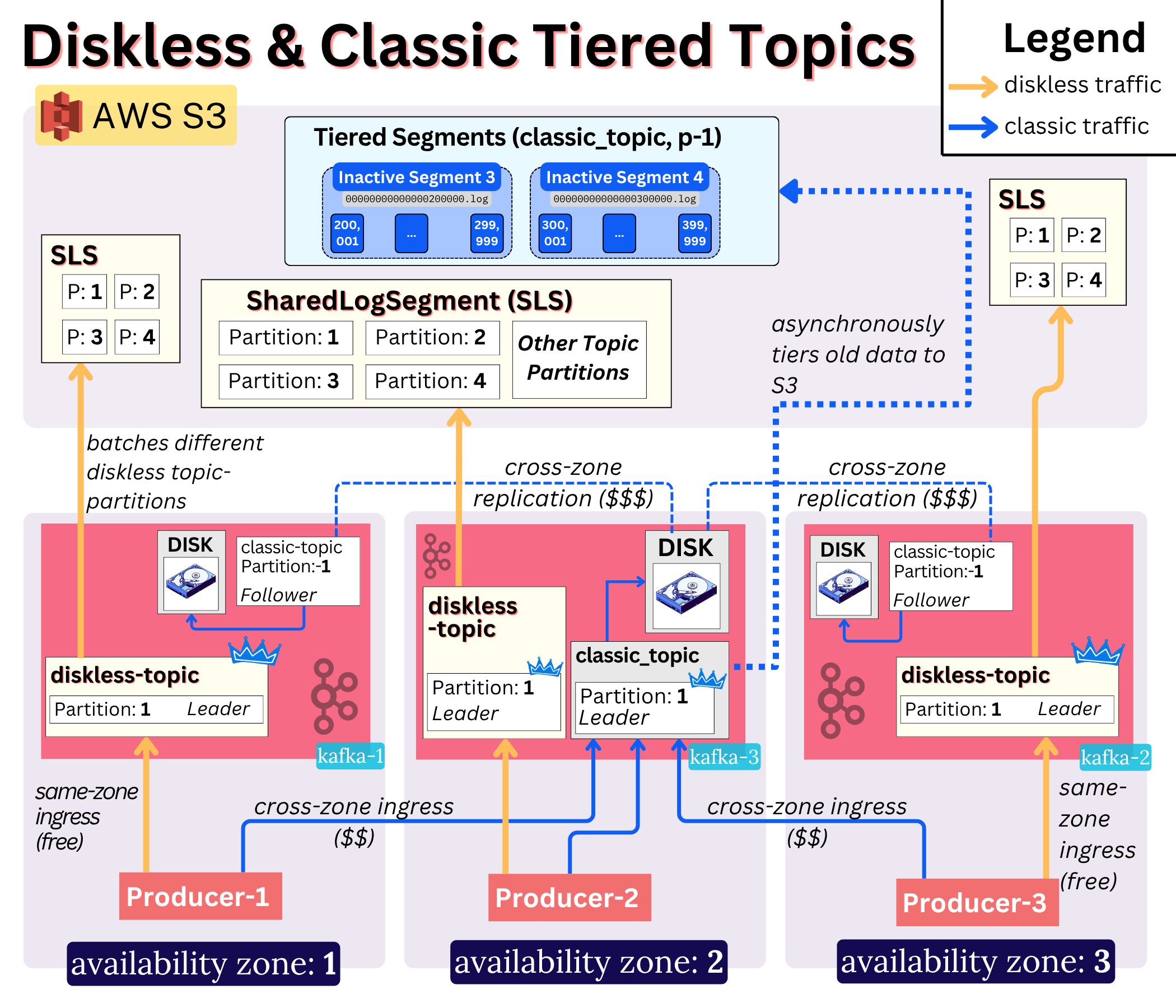

a visualization of the diskless (“direct-to-s3”) topic and classic tiered topic architecture

For good reason - the vast majority of Kafka data wants to be stored in a lake 👇

In 2025 Aiven shared that across their 4000+ cluster fleet:

• ~60% of their sink Connectors target Iceberg-compliant, object-store sinks (DBX, Snow, S3)

• ~85% of their total sink connector throughput is lake-bound, i.e. the majority of data volume goes there

If all Kafka data is already in S3, and the data is structured, and the year is 2026… why isn’t it in an Open Table Format?

…

The LakeStream 🌊🐟

StreamNative coined the term for what many of us have been watching unfold - the convergence between analytical and operational workloads.⁵

Lakestream - an architecture that treats event streams as a first-class lakehouse primitive.

In other words - one that integrates Kafka with Open Table Formats natively.

This is a very compelling vision, because proper integration would unlock TRUE infinite storage for Kafka.

That is because not only would the data:

[1] be in a super cost efficient location (S3)

[2] be in a super cost efficient format (Parquet compresses extremely well)

[3] but it would also be super useful to your whole organization: any engine can query it directly without having to duplicate the data, without transformation and without the organizational & operational pain of having to go through Kafka.

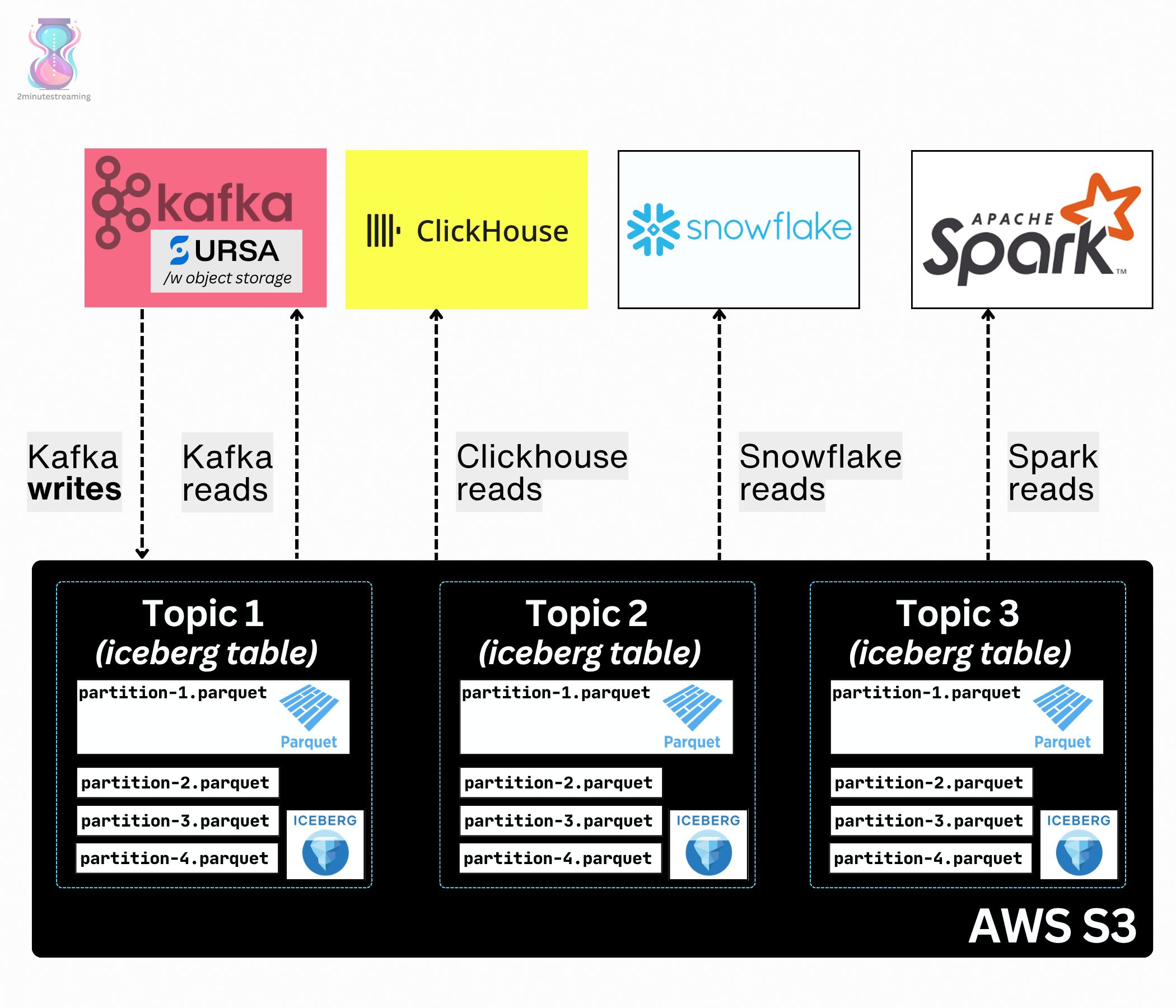

a rough, ambitious vision of a lakestream-type stack

Like it or not, today’s Kafka architecture holds your data hostage and limits its usefulness.

Segments are kept in S3 but only Kafka can read the log segment’s storage format

Kafka clients require some random Kafka-specific “schema registry” to get the authoritative reference for what format that data is in.

Guys, it’s 2026. Why can’t I create a Kafka topic with a simple SQL CREATE TABLE command and have my query engine read it? Databricks’ Zerobus already does this.

👉 an example done right: Ursa

I most recently dove deep into Ursa (for Kafka), which was an announcement from StreamNative⁷ that coined this LakeStream idea.

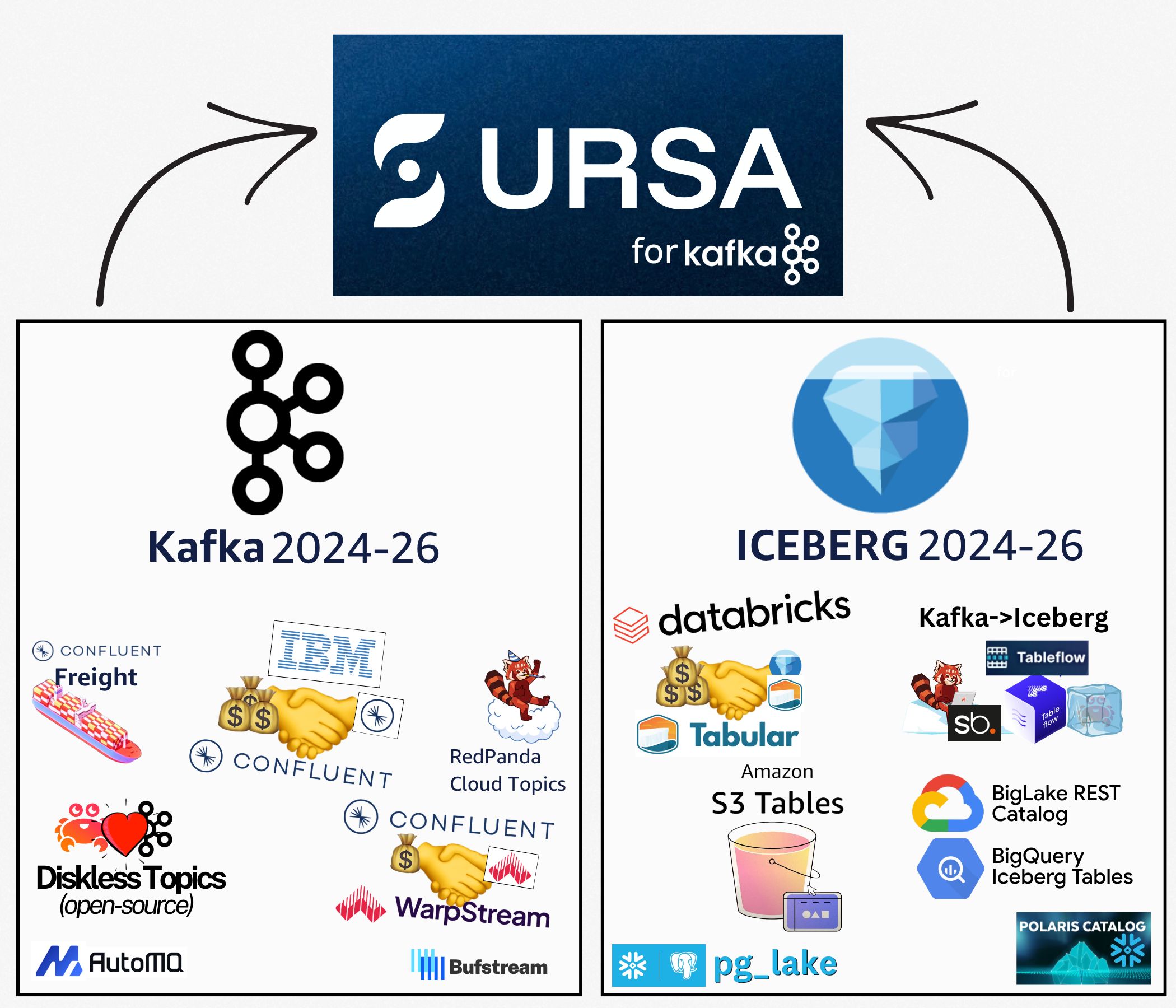

It immediately clicked for me because I see it as the natural convergence of two major trends I’ve been watching in the data space for the last two years:

• massive price wars & consolidation on the Kafka side

• fierce table format adoption on the Iceberg side

In essence: diskless + Iceberg is inevitable.

In a few words, Ursa-for-Kafka⁹ is a minimally invasive Kafka fork that adds a new topic type, which you enable with a per-topic config (ursa.storage.enabled).
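For a feel of what "a per-topic config" means in practice, here is a small Python helper that builds a `kafka-topics.sh` command line. Treat it as a sketch under assumptions: the config key comes from the announcement, but the value `true`, the topic name, the partition count, the bootstrap address, and the idea that the fork keeps the stock CLI unchanged are all my guesses. The script only prints the command; it doesn't talk to a cluster.

```python
import shlex


def create_topic_command(topic: str, partitions: int, configs: dict[str, str],
                         bootstrap: str = "localhost:9092") -> str:
    """Build a kafka-topics.sh invocation that creates a topic with per-topic configs."""
    args = [
        "kafka-topics.sh",
        "--bootstrap-server", bootstrap,
        "--create",
        "--topic", topic,
        "--partitions", str(partitions),
    ]
    # Each per-topic setting is passed as its own --config key=value pair.
    for key, value in configs.items():
        args += ["--config", f"{key}={value}"]
    return shlex.join(args)


# Hypothetical topic; "true" is an assumed value for the Ursa flag.
command = create_topic_command("clickstream", 6, {"ursa.storage.enabled": "true"})
print(command)
```

The appeal of this design is that nothing else about the topic changes from the client's point of view: the storage profile is just one more key next to settings like `retention.ms`.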

These types of topics are entirely diskless and leaderless, and get stored natively in an open table format. That’s all you need for the lakestream vision:

the zero-copy architecture with Ursa topics
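If "diskless and leaderless" sounds abstract, here is a toy Python sketch of the general shape. To be clear, this is not Ursa's actual protocol: `ObjectStore`, `OffsetIndex` and `Broker` are invented names, everything is in memory, there is a single partition, and the open table format part is left out entirely (in a real system the uploaded objects would end up as table data files). It only shows why no broker needs to "own" the log once the data lives in shared storage and a shared index hands out offsets.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ObjectStore:
    """Stand-in for S3: blobs addressed by key, written once."""
    blobs: dict[str, list[str]] = field(default_factory=dict)


@dataclass
class OffsetIndex:
    """Stand-in for the shared metadata service that orders writes."""
    next_offset: int = 0
    entries: list[tuple[int, str]] = field(default_factory=list)  # (base_offset, blob_key)

    def commit(self, blob_key: str, record_count: int) -> int:
        base = self.next_offset
        self.entries.append((base, blob_key))
        self.next_offset += record_count
        return base


@dataclass
class Broker:
    name: str
    store: ObjectStore
    index: OffsetIndex
    _seq: count = field(default_factory=count)

    def produce(self, records: list[str]) -> int:
        # 1) Upload the batch as a new object: no local disk, no replication to peers.
        key = f"{self.name}/{next(self._seq):06d}"
        self.store.blobs[key] = list(records)
        # 2) Commit it to the shared index, which assigns the offsets.
        return self.index.commit(key, len(records))

    def fetch(self, offset: int) -> str:
        # Any broker can serve any offset, because all state is shared.
        if not 0 <= offset < self.index.next_offset:
            raise KeyError(offset)
        for base, key in reversed(self.index.entries):
            if base <= offset:
                return self.store.blobs[key][offset - base]
        raise KeyError(offset)


store, index = ObjectStore(), OffsetIndex()
broker_a = Broker("broker-a", store, index)
broker_b = Broker("broker-b", store, index)

first = broker_a.produce(["e0", "e1"])
second = broker_b.produce(["e2"])        # same partition, different broker
third = broker_a.produce(["e3", "e4"])
```

Both brokers wrote to the same log, and either of them can read any record back. The brokers themselves hold no state worth protecting, which is where the cost savings of diskless designs come from.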

Where Ursa stands out most from the other Kafka implementations is the fact it combines topic profiles (diskless or classic) with native open table format support.

As I said - these two trends are inevitable. Diskless topics are coming to Kafka already. The only question is when we will get some form of native OTF support (and from whom).

StreamNative plan to open source this fork.⁸

🎬 Fin

If you learned something from this short read, please help support our growth so we can continue delivering valuable content: share this in your company’s Slack channel.

More Ursa? 🔥

While researching, I was leaving some interesting crumbs on social media - here they are:

⚙️ How Writes & Iceberg-native Compaction work in Ursa

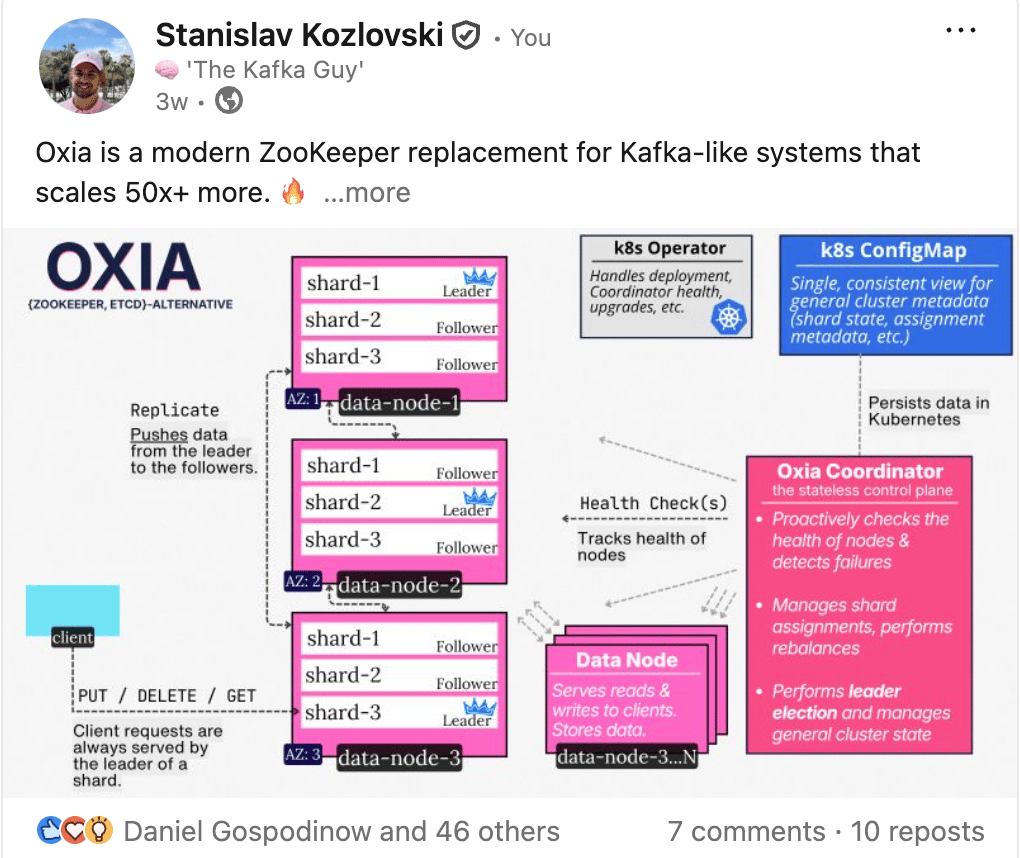

⭐️ Oxia - a modern, cloud-native ZooKeeper replacement

This one surprised me. I ended up really liking this system. I’m surprised it isn’t more popular than it is.

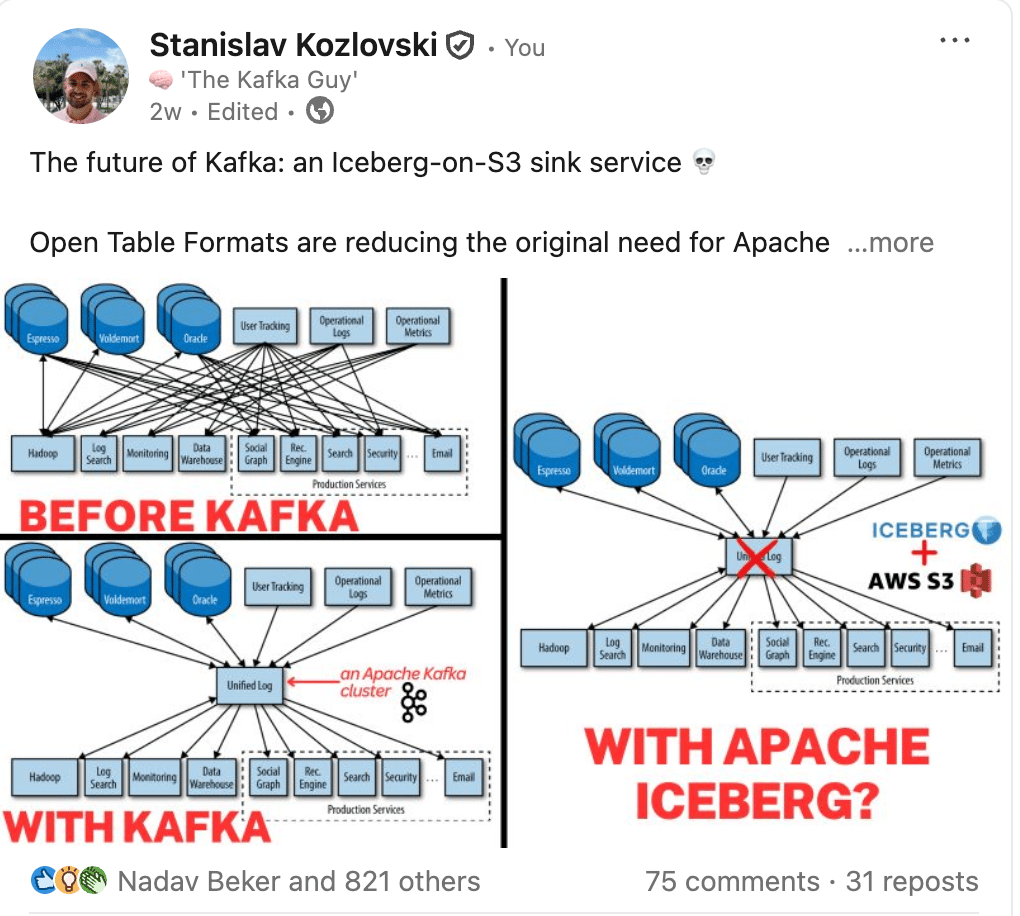

😳 Will open table formats reduce the need for Kafka?

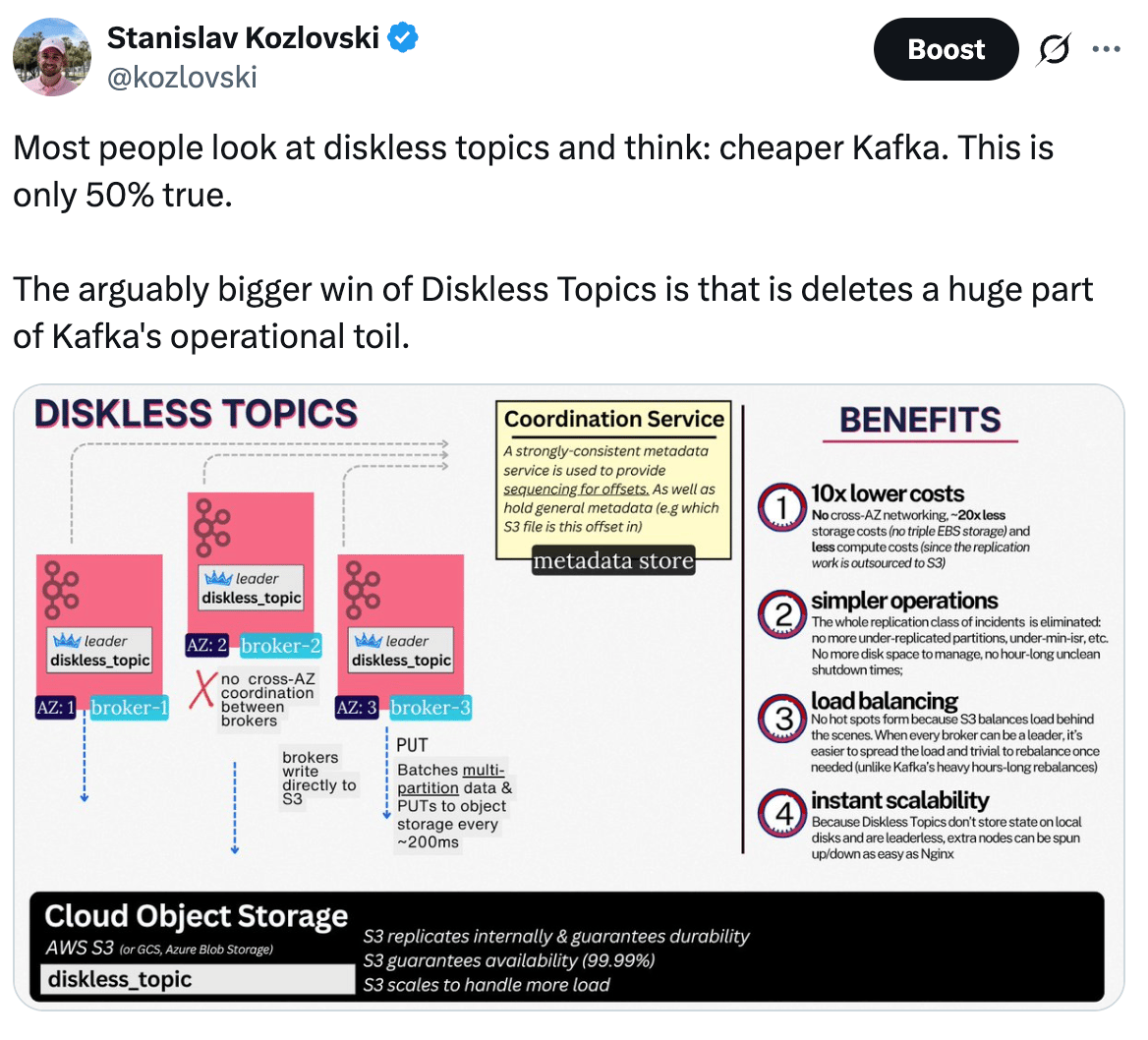

🙌 The Benefits of Diskless Topics

🙌 Covering StreamNative’s Pivot to a Kafka company (well… not really)

YouTube (yes, I’m on there now too)

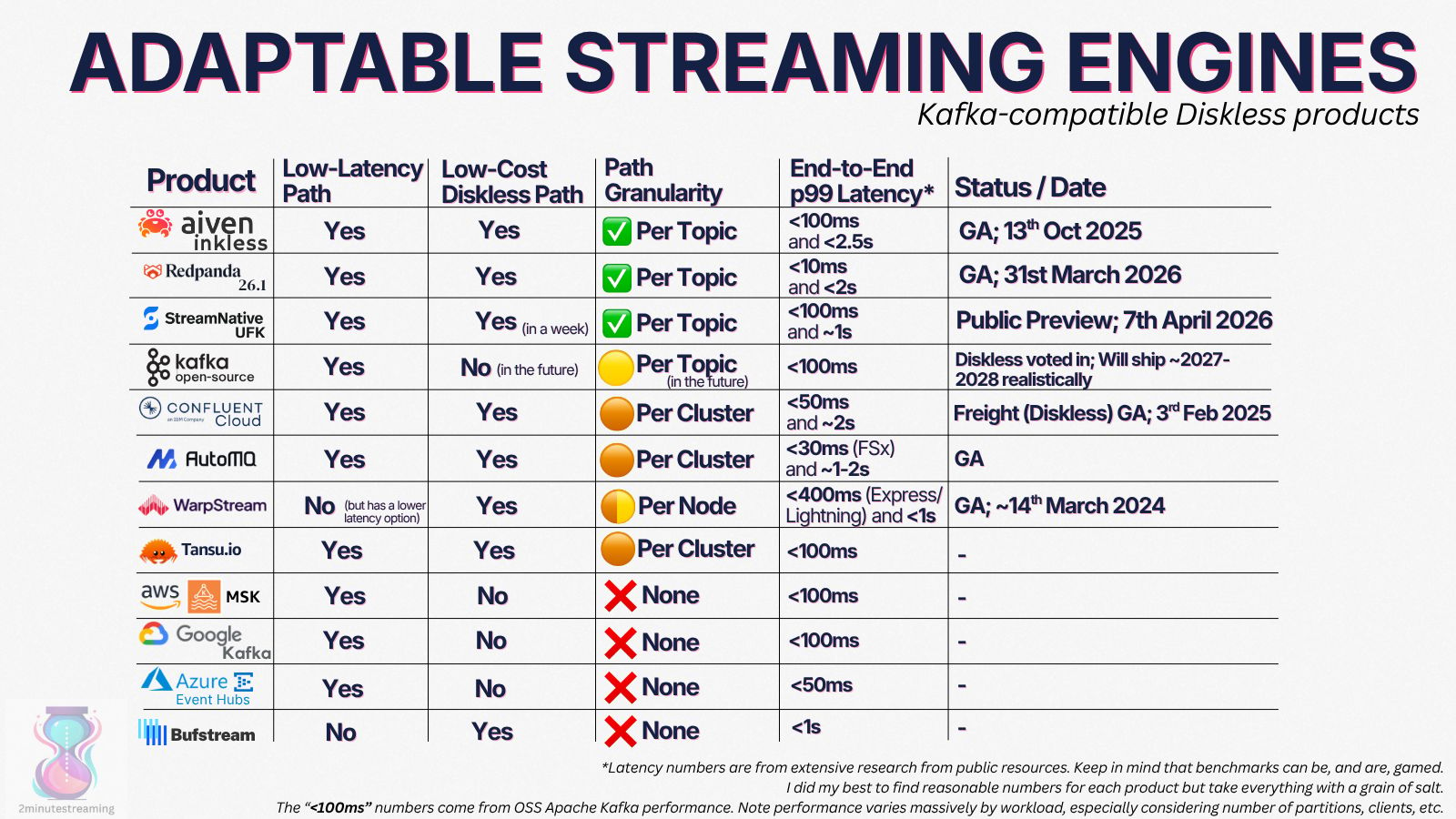

👉 The State of “Adaptable” Topic Profiles in all Kafka vendors

By “adaptable”, I simply mean - does the Kafka engine support both fast (<100ms p99 e2e) and slower (but 10x cheaper) types of topics?

🪄 One-shotting a Diskless Kafka in Python

I recommend you try vibe coding from your phone in a park somewhere

Until next time,

~Stan

Apache®, Apache Kafka®, Kafka, and the Kafka logo are either registered trademarks or trademarks of the Apache Software Foundation in the United States and/or other countries. No endorsement by The Apache Software Foundation is implied by the use of these marks.

1 I’m simplifying for clarity. There are other open table formats besides Iceberg/Delta Lake, they can be hosted on any storage system (not just S3), and the files don’t have to be Parquet. But the most common combination seems to be Parquet+Iceberg+S3.

2 The classic stack involves a (disorganized) data lake with structured & unstructured files like PDFs, and a cleaner data warehouse (which you query for analytics). This means you have to govern, secure, update and manage two places. Some orgs prefer to have it in one place - the data lakehouse enables this.

3 Compared to the classic stack, it should come out cheaper. At a minimum, there is less storage paid for because only one copy exists - the source of truth in the lakehouse. Not to mention all the fees that came with ingesting the data into the warehouse. As an example, Uber was thinking of unifying the storage (in the context of Hudi) to save on storage costs back in 2021.

4 We have 20+ Kafka vendors. Besides everyone supporting KIP-405 Tiered Storage, many support a direct-to-s3 diskless type of Kafka: Aiven Inkless, AutoMQ, Bufstream, Confluent Freight, Redpanda Cloud Topics, StreamNative Ursa, WarpStream, Tansu.

5 This has been happening for a while (Confluent announced Tableflow in 2024), but I think it happened through organic experimentation and adoption of new promising technologies. Nobody has stepped back to rethink what Kafka should look like in a world where open table formats dominate the data lake space.

Apache Fluss is another log ingestion engine that makes tables central to its storage architecture (although it doesn’t have a Kafka API).

6 The only exception may be for compliance reasons that require historical data to be stored. That’s still “useful” to the organization for clearing regulatory requirements.

7 StreamNative were “the Pulsar guys” for lack of a better name. They were a Pulsar-focused company who through the years pivoted into a lakehouse-streaming company (with Kafka API support, and now a proper Kafka engine)

8 It is said to be open sourced in ~9 months’ time - which is around January 2027. Until then, they have open-sourced the formally-verified leaderless log protocol that Ursa leverages. I had a ton of fun with it, to the point where I vibe-coded my own Diskless implementation in Python.

9 It’s called “Ursa for Kafka” because it is backed by the Ursa storage engine. This is a really good paper that explains it - https://www.vldb.org/pvldb/vol18/p5184-guo.pdf